You’re standing in the lot after finals, staring at the recap sheet. Your corps nailed the drill. The forms were clean. Everyone hit their dots. But the visual ensemble score didn’t match what you felt on the field. Understanding how judges actually evaluate visual ensemble can transform how you approach every rehearsal block, every rep, and every performance.

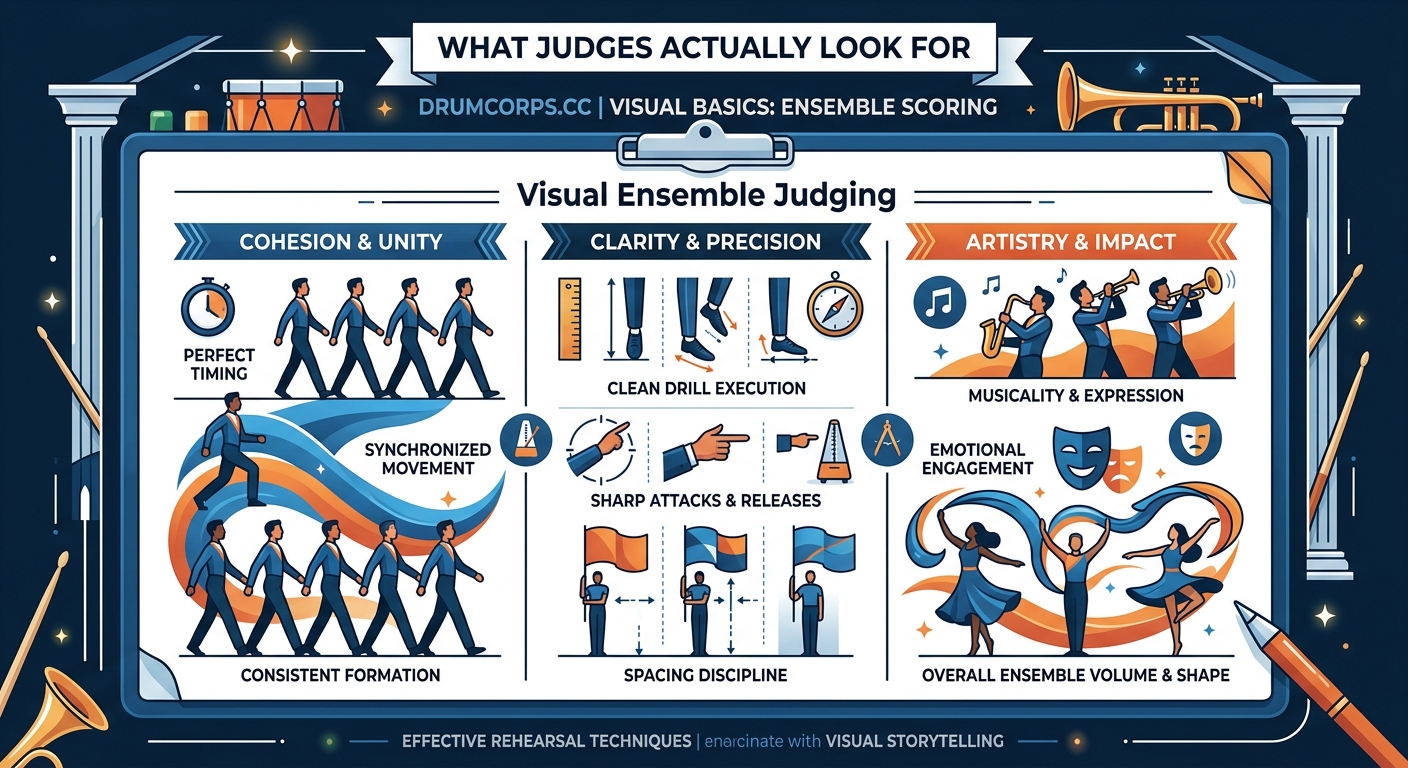

Visual ensemble judges score based on achievement and effect. Achievement measures technical execution like body alignment, foot timing, and spacing accuracy. Effect evaluates the visual impact, including energy, dynamics, and how well movement supports the musical program. Judges award points from 0 to 20, with decimals, based on comparative excellence within each competitive class.

Understanding the Visual Ensemble Caption Structure

Visual ensemble sits at the heart of competitive scoring in drum corps. Unlike individual visual performance, which focuses on personal technique, visual ensemble judges evaluate the entire performing body as a single unit.

The caption typically divides into two subcaptions. Achievement covers the technical execution of movement. Effect addresses the artistic impact and design success.

Each judge works independently. They watch the entire show from specific vantage points, usually in the press box or high in the stands. They’re looking at the big picture, literally. Individual mistakes matter less than collective precision and unified execution.

The scoring range runs from 0 to 20 points per judge. Most competitive corps at DCI Finals score between 17.0 and 19.5 in visual ensemble. Tenths of a point separate placements. Sometimes hundredths decide championships.

What Achievement Judges Actually Watch

Achievement judges focus on execution quality. They measure how well performers execute the visual program’s technical demands.

Body carriage comes first. Judges want to see consistent posture across all performers. Shoulders stay level. Heads remain stable. Upper bodies stay quiet while legs do the work. Any bobbing, leaning, or twisting breaks the visual line and costs points.

Foot timing matters enormously. Not just hitting the correct count, but placing the foot at precisely the same moment as everyone else in the form. Judges can see timing discrepancies from 50 yards away. The better corps achieve what looks like a single organism moving together.

Spacing accuracy determines much of the achievement score. Judges evaluate:

- Interval consistency within forms

- Depth alignment in company fronts

- Arc precision in curved forms

- Distance relationships during transitions

Step size consistency affects spacing. If some members take bigger steps than others, forms distort. Judges notice when a block compresses or stretches during movement.

Technique execution covers the fundamental mechanics. Roll-step quality becomes obvious under competitive scrutiny. Judges watch for proper weight transfer, toe placement, and uniform knee lift.

“I’m not looking for perfection. I’m looking for a unified approach to the technique. Show me that everyone learned the same vocabulary and executes it with the same priorities.” — Visual ensemble judge with 15+ years experience

The Effect Judge’s Perspective

Effect judges evaluate the visual program’s impact and success. They care less about technical minutiae and more about the overall impression.

Energy projection ranks high. Does the ensemble perform with appropriate intensity for each moment? Can you see the difference between forte and piano sections in their movement quality? Effect judges want to feel the performers’ commitment from the stands.

Dynamic range in movement creates visual interest. Just like music needs loud and soft moments, visual programs need fast and slow, big and small, dense and sparse. Corps that move at one speed or maintain constant spacing throughout a show limit their effect potential.

Visual musicality connects movement to sound. Effect judges notice when the ensemble’s motion complements the musical phrase. They reward moments where visual and musical climaxes align. They penalize disconnect between what they see and hear.

Form clarity at distance matters for effect. Beautiful drill on paper means nothing if judges can’t read the forms from the press box. The best designers create shapes that communicate clearly from 100 yards away while maintaining interesting internal structure.

Staging and framing contribute to effect scores. How does the ensemble use the field space? Do they create depth through layering? Do they frame soloists or featured sections effectively? Judges evaluate these compositional choices.

The Scoring Process Step by Step

Understanding the mechanics helps demystify the numbers on recap sheets.

- Pre-show preparation: Judges review the corps’ previous performances if available. They note design intent and technical approach. They don’t score based on reputation, but context helps them evaluate growth and achievement level.

- Real-time evaluation: During the performance, judges watch continuously. They take minimal notes. Most scoring happens through trained observation and comparative memory. They’re building an overall impression while noting specific strengths and weaknesses.

- Immediate post-show scoring: Within minutes of the final note, judges assign their scores. They consider the entire performance holistically. They compare what they just saw to other corps in the same class and competition.

- Score justification: Judges must be prepared to explain their scores. They note specific moments that influenced their evaluation, both positive and negative. These comments help corps understand their placement and identify improvement areas.

The comparative nature of judging means scores shift throughout a season. A performance level that earns a 17.5 in June might earn only a 16.8 in August if the corps stands still while others improve. Judges score against the competitive field, not an absolute standard.

Common Scoring Factors That Separate Tenths

Small details accumulate into scoring differences. Here’s what typically separates corps by tenths of a point:

| Scoring Factor | What Judges See | Point Impact |
|---|---|---|
| Timing precision | Entire ensemble hits counts together vs. scattered placement | 0.2-0.4 per show |
| Form accuracy | Clean geometric shapes vs. warped or fuzzy forms | 0.1-0.3 per show |
| Body alignment | Unified carriage vs. individual variations | 0.1-0.2 per show |
| Transition cleanliness | Smooth form changes vs. visible scrambling | 0.2-0.5 per show |
| Energy consistency | Sustained intensity vs. visible fatigue | 0.1-0.3 per show |
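To get a feel for how these small ranges accumulate, here is a minimal Python sketch that adds up the low and high ends of the table. It assumes the factors simply add together, which is a simplification for illustration only, since judges score holistically rather than by tallying deductions. The names `IMPACT_RANGES` and `combined_impact` are hypothetical.

```python
# Point-impact ranges copied from the table: (low, high) per show.
IMPACT_RANGES = {
    "Timing precision": (0.2, 0.4),
    "Form accuracy": (0.1, 0.3),
    "Body alignment": (0.1, 0.2),
    "Transition cleanliness": (0.2, 0.5),
    "Energy consistency": (0.1, 0.3),
}


def combined_impact(ranges):
    """Sum the low and high ends of each range.

    Assumption: the factors are independent and additive, which real
    judging does not guarantee.
    """
    low = sum(lo for lo, _ in ranges.values())
    high = sum(hi for _, hi in ranges.values())
    # Round to one decimal to hide floating-point noise in the sums.
    return round(low, 1), round(high, 1)


low, high = combined_impact(IMPACT_RANGES)
print(f"Combined swing if every factor slips: {low} to {high} points")
```

Under that additive assumption, a corps that is slightly behind in every factor could trail by 0.7 to 1.7 points, which is why no single detail can be ignored.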

Recovery from errors affects scores differently than the errors themselves. A single performer breaking form costs less if the ensemble maintains integrity. Widespread reaction to one mistake costs more than the original break.

Judges evaluate difficulty in context. A simpler program executed perfectly often scores higher than an ambitious program with visible struggles. The achievement must match the demand.

Design Choices That Influence Ensemble Scores

Visual designers make choices that either support or hinder scoring potential. Smart design acknowledges judging realities.

Move density and pacing matter. Too many transitions prevent achievement of clean forms. Too few transitions limit effect potential. The best programs balance technical demand with performance capacity.

Form vocabulary affects clarity. Simple geometric shapes read better from distance than complex asymmetric forms. However, simple shapes alone don’t generate high effect scores. Designers must balance clarity with sophistication.

Spacing choices create challenges. Tighter intervals increase difficulty but improve visual impact when executed well. Wider spacing provides safety but can look sparse. Championship corps typically use varied spacing throughout their programs.

Phasing and time delays add visual interest but multiply timing challenges. When different sections perform the same movement at different times, every group must execute with precision. Sloppy phasing destroys the intended effect.

How Judges Handle Different Weather and Field Conditions

Environmental factors affect performances, and judges adjust their evaluation accordingly.

Rain changes everything. Wet fields affect marching technique. Visibility decreases. Judges recognize these challenges but still score comparatively. If everyone performs in the same rain, the best execution still wins.

Wind impacts body control and timing. Strong crosswinds make maintaining alignment harder. Judges notice when performers compensate effectively versus when they fight the conditions unsuccessfully.

Field surface variations matter. Some venues have crown or slope. Artificial turf performs differently than natural grass. Experienced judges recognize how field conditions affect execution and consider this in their evaluation.

Lighting affects effect scores more than achievement. Poor visibility limits the audience’s experience, which factors into general effect evaluation. Achievement judges focus on technical execution regardless of lighting quality.

What Judges Notice That Performers Often Miss

Judges see patterns that individual members can’t perceive from inside the ensemble.

Drift happens gradually. A form that starts accurate slowly migrates across the field. Judges watch reference points. They notice when a block shifts five yards over 32 counts even if spacing within the block stays consistent.
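The drift example can be put in per-count terms with a short Python sketch. It assumes a standard 8-to-5 step (eight steps per five yards, or 22.5 inches per step) as the reference size. The function name `drift_per_count` is hypothetical.

```python
INCHES_PER_YARD = 36
# Assumption: a standard 8-to-5 step, i.e. 8 steps per 5 yards.
STANDARD_STEP_INCHES = 5 * INCHES_PER_YARD / 8  # 22.5 inches


def drift_per_count(drift_yards, counts):
    """Average drift in inches accumulated on each count of the move."""
    return drift_yards * INCHES_PER_YARD / counts


per_count = drift_per_count(5, 32)
share_of_step = per_count / STANDARD_STEP_INCHES
print(f"{per_count:.3f} inches per count, {share_of_step:.0%} of a standard step")
```

Five yards over 32 counts works out to 5.625 inches per count, a quarter of a standard step. That is small enough to go unnoticed inside the block and large enough to be obvious from the press box.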

Section-specific technique habits become obvious. When the brass section marches differently than the battery, judges see the disconnect. Unified technique training across all sections improves ensemble scores.

Recovery patterns reveal preparation quality. Do performers recover independently or does the ensemble have systems for maintaining alignment? Judges notice whether the ensemble self-corrects or continues with errors.

Attention and focus show visually. Judges can see when performers mentally check out. They notice when energy drops during less demanding segments. Championship corps maintain full commitment throughout entire programs.

Training Methods That Directly Improve Ensemble Scores

Specific practice approaches target the elements judges evaluate most heavily.

Mirror exercises build awareness. When the entire ensemble faces inward and watches each other, timing discrepancies become obvious. Members learn to match not just counts but actual foot placement moments.

Video review from judge perspective helps enormously. Recording from press box height shows what judges see. Ground-level video, while useful for individual technique, doesn’t reveal ensemble clarity issues.

Slow motion repetitions develop precision. Running challenging transitions at half tempo allows members to achieve accuracy before adding speed. Judges reward clean execution at appropriate tempo over fast but sloppy movement.

Silent marching blocks expose timing issues. Without musical reference, performers must rely on visual and kinesthetic awareness. This builds the internal timing that judges reward. Upper-body carriage problems also become apparent during silent work.

Sectional versus full ensemble balance matters. Too much sectional time creates section-specific habits. Too little prevents detailed work. Championship corps alternate between focused sectional refinement and full ensemble integration.

The Relationship Between Visual and Music Scores

Visual ensemble scoring doesn’t happen in isolation. Judges consider the entire program context.

Visual-musical alignment affects both captions. When movement supports musical phrasing, both visual effect and music effect scores benefit. Disconnect between visual and musical programs limits scoring potential in both areas.

Timing coordination between sections matters. If the visual ensemble hits forms on different counts than the music ensemble hits releases, judges notice the lack of coordination. Both captions suffer.

Program pacing affects judging. A show that builds appropriate visual and musical intensity together creates stronger effect than one where visual and musical energy peaks don’t align.

Mistakes That Cost More Than You Think

Some errors carry disproportionate scoring impact.

Early or late form arrivals destroy the illusion of unity. One person hitting their dot four counts early stands out more than minor spacing inaccuracy. Timing precision matters more than position precision.

Visible confusion during transitions signals preparation gaps. When performers clearly don’t know where to go next, judges question the ensemble’s readiness. Confident execution of slightly imperfect drill scores better than hesitant execution of perfect drill.

Inconsistent performance quality across the show reveals conditioning or mental focus issues. Starting strong but fading in the closer costs effect points. Judges evaluate the entire performance, not just the best moments.

Equipment drops or falls impact visual scores even though they seem like individual errors. The ensemble’s reaction matters. Does everyone maintain focus or do surrounding members break character?

How Scores Evolve Throughout a Season

Understanding score progression helps set realistic expectations.

Early season scores reflect current execution, not potential. Judges may see what the program could become, but they score the achievement level in front of them. A corps might score 15.2 in June for a program that will score 18.5 in August.

Mid-season improvements should be steady. Typical improvement rates run about 0.1 to 0.2 points per week in visual ensemble. Faster improvement often indicates early season underperformance rather than exceptional progress.
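As a rough planning aid, the weekly improvement range can be turned into a projected score band. The Python sketch below assumes steady linear improvement and caps the result at the 20-point caption maximum. The function name `project_score`, the starting score, and the eight-week span are hypothetical examples, not a prediction method judges or corps are known to use.

```python
MAX_SCORE = 20.0  # top of the caption's scoring range


def project_score(start, weeks, weekly_low=0.1, weekly_high=0.2):
    """Return (low, high) projected scores after `weeks` of steady improvement.

    Assumption: improvement is linear at 0.1 to 0.2 points per week.
    """
    low = min(MAX_SCORE, start + weeks * weekly_low)
    high = min(MAX_SCORE, start + weeks * weekly_high)
    # Round to one decimal, matching how caption scores are usually quoted.
    return round(low, 1), round(high, 1)


low, high = project_score(15.2, 8)
print(f"Projected range after 8 weeks: {low} to {high}")
```

Because judging is comparative, a band like this is only a sanity check on expectations. Actual scores also depend on how the rest of the field improves.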

Late season scoring tightens. As championships approach, the competitive field narrows. Score spreads compress. The difference between first and sixth place might shrink from two points in June to 0.4 points in August.

Plateau periods happen. Sometimes scores stall despite continued improvement. This often reflects the competitive field catching up rather than actual stagnation. Judges score comparatively, not against absolute standards.

Reading and Using Recap Sheets Effectively

Recap sheets contain valuable information beyond raw scores.

Score spreads between judges reveal consistency. When two visual ensemble judges score a corps within 0.1 points of each other, the evaluation is reliable. Spreads larger than 0.3 points suggest the performance had both strong and weak elements.
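When working through a stack of recaps, the two spread thresholds can be applied mechanically. This Python sketch is one way to do it. The function name `classify_spread` and the label for the middle band are hypothetical, since the thresholds leave the range between 0.1 and 0.3 unnamed.

```python
def classify_spread(score_a, score_b):
    """Label the gap between two judges' scores for the same corps."""
    # Round to hundredths so floating-point noise does not shift a
    # 0.1 spread across the threshold.
    spread = round(abs(score_a - score_b), 2)
    if spread <= 0.1:
        return "consistent"
    if spread > 0.3:
        return "mixed performance"
    return "moderate"  # hypothetical label for the unnamed middle band


for a, b in [(17.8, 17.9), (17.5, 17.7), (17.5, 18.0)]:
    print(a, b, classify_spread(a, b))
```

A "mixed performance" label is a cue to read both judges' comments side by side, because each may have been reacting to different parts of the show.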

Comparative placement matters more than absolute scores. If your visual ensemble score drops but your placement improves, you’re progressing appropriately. Focus on beating specific corps, not hitting arbitrary point targets.

Comments provide direction. Judges’ written feedback identifies specific improvement areas. Phrases like “timing in transitions” or “form clarity in opener” pinpoint where work should focus.

Score patterns across captions tell stories. If visual ensemble scores lag behind music scores consistently, the visual program might be too demanding for current execution capacity. Balanced scores across captions indicate appropriate program design.

Preparing for Different Judging Panels

Different judges have different priorities within the established criteria.

Research judge backgrounds when possible. Some judges emphasize technical precision. Others weight effect more heavily. Understanding tendencies helps corps emphasize their strengths appropriately.

Consistency matters more than judge-specific preparation. Corps that execute their program excellently score well regardless of which judges evaluate them. Chasing specific judge preferences often backfires.

Regional versus national competitions use different judge pools. Regional judges might be less experienced or have different backgrounds. Scores at regional events don’t always predict national championship scores accurately.

Putting Visual Ensemble Scoring Into Practice

The scoring system rewards preparation, precision, and performance quality. Judges evaluate technical execution and artistic impact through trained, experienced observation. They compare corps within competitive classes and assign scores that reflect relative achievement and effect.

Understanding how judges score visual ensemble changes how you approach every rehearsal. Focus on the elements judges actually evaluate. Build timing precision and body alignment consistency. Develop dynamic range and energy control. Train the ensemble to perform as a unified body rather than a collection of individuals.

The recap sheet numbers reflect hours of detailed work. Every rep where you match your foot timing to the person next to you matters. Every block where you maintain posture through fatigue counts. Every run-through where you commit fully to the performance contributes to the score a judge will assign on finals night. Take that knowledge back to your next rehearsal and make every repetition count.